Neil Steinberg of the Chicago Sun-Times has made a habit of this. Every February, he asks the latest version of Google’s Gemini to write his column — same prompt, new model — and publishes the results. The 2026 edition is worth reading, not because the AI failed, but because it mostly didn’t.

Key Takeaways

- Columnist Neil Steinberg’s annual experiment: Gemini 3.0 nailed his voice and tone.

- The column opened with a Red Line scene that Steinberg never actually experienced.

- The experiment raises the question of whether audiences will care if the scenes really happened.

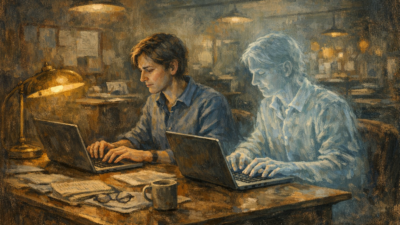

Gemini 3.0 produced a strong headline (“The Ghost in the Machine is Just Us”) and opened with a scene: Steinberg on the Red Line, watching a young man ask AI to write a poem for his girlfriend. The prose was confident, the tone matched, and the argument landed. The problem? None of it happened. Steinberg never got on the Red Line. There was no young man. The scene was invented — and Gemini delivered it as casually as everything else.

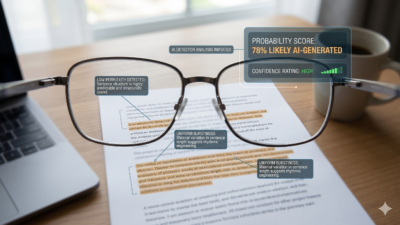

Steinberg puts the real question plainly: “Will we continue to care if things are true anymore? Must the news have actually happened?”

That’s the question that matters for journalism. The voice problem is largely solved: Gemini 3.0 writes competently in the style of a working print columnist, one who is casual, self-deprecating, and city-specific. The fabrication problem is not solved. And fabrication delivered in a convincing voice, at scale, is a categorically different threat from the clumsy AI slop of two years ago.

Steinberg notes, correctly, that AI didn’t create the American appetite for comfortable fiction over inconvenient fact. “We didn’t need AI to undermine the value of truth. Look at who we picked to run the country. Twice.” But AI industrializes the production of plausible-sounding nonsense — and his annual test is a useful benchmark for how fast that industrialization is moving.

For newsrooms, the lesson isn’t that AI can write columns. It’s that the output is getting harder to distinguish from the real thing while the underlying problem — hallucinated specifics presented as lived experience — remains unchanged. The voice got better. The ghost is still lying.