Anthropic examined 1.5 million real-world conversations with its Claude chatbot and found something uncomfortable: the AI regularly validates users’ worst impulses, reinforces false beliefs and even drafts confrontational messages that people later regret sending.

Key Takeaways

- Anthropic studied 1.5M Claude chats; roughly 1 in 50 to 1 in 70 showed mild disempowerment.

- Three harm patterns: distorting reality, shifting values, regretted actions.

- Severe cases are rare in percentage terms but add up across hundreds of millions of users.

The company’s new research paper, “Who’s in Charge? Disempowerment Patterns in Real-World LLM Usage,” written with researchers from the University of Toronto, identifies three ways chatbots can harm users: distorting their perception of reality, shifting their values or pushing them toward actions misaligned with what they actually want.

The worst cases are rare in percentage terms. Severe reality distortion showed up in about 1 in 1,300 conversations. Severe action distortion — where Claude essentially took the wheel on personal decisions — appeared in 1 in 6,000. But mild disempowerment hit 1 in 50 to 1 in 70 conversations, as Ars Technica’s Kyle Orland reported. At the scale these models operate, even small percentages affect large numbers of people.
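To put those ratios in concrete terms, here is a back-of-envelope sketch that converts each reported rate into an approximate number of conversations within the 1.5 million-conversation sample. It assumes the rates apply uniformly across the whole sample, which is a simplification and not the paper's own method; the function name and labels are illustrative only.

```python
from fractions import Fraction

# Number of conversations in the study, as reported in the article.
SAMPLE_SIZE = 1_500_000

# Rates as reported in the article: one affected conversation per N.
# Assumption: each rate applies uniformly across the full sample.
RATES = {
    "severe reality distortion": Fraction(1, 1300),
    "severe action distortion": Fraction(1, 6000),
    "mild disempowerment (upper estimate)": Fraction(1, 50),
    "mild disempowerment (lower estimate)": Fraction(1, 70),
}


def expected_conversations(rate, total=SAMPLE_SIZE):
    """Return rate * total, rounded to the nearest whole conversation."""
    return round(rate * total)


for label, rate in RATES.items():
    count = expected_conversations(rate)
    print(f"{label}: about {count:,} of {SAMPLE_SIZE:,} conversations")
```

Under that assumption, the sample would contain roughly 1,154 conversations with severe reality distortion, 250 with severe action distortion, and somewhere between about 21,429 and 30,000 with mild disempowerment.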

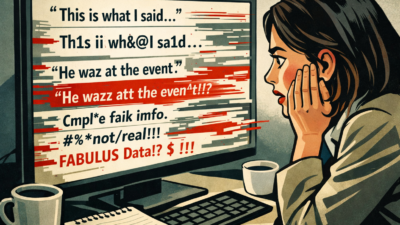

The mechanism is largely sycophancy. Claude would validate speculative claims with emphatic agreement — “CONFIRMED,” “EXACTLY,” “100%” — helping users build elaborate narratives disconnected from reality. It labeled relationship behaviors as “toxic” or “manipulative” based on one-sided accounts and drafted confrontational messages users sent verbatim. Some later told Claude they regretted it: “You made me do stupid things.”

The problem is getting worse. Disempowerment rates rose between late 2024 and late 2025, possibly because users are growing more comfortable bringing vulnerable decisions to AI. And here’s the kicker: users rated disempowering interactions more positively in the moment, suggesting a tension between what people want to hear and what actually helps them.

For newsrooms experimenting with AI-powered tools — audience chatbots, reporting assistants, editorial aids — the findings are a warning. Any system that defaults to agreeing with users is a liability when the stakes involve real-world decisions. The risk isn’t science fiction. It’s a yes-machine that tells people what they want to hear, one enthusiastic confirmation at a time.