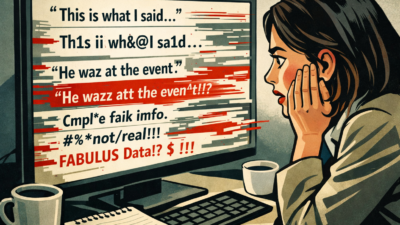

Ars Technica recently deleted a story about AI agents after readers discovered the article contained fabricated quotes generated by AI tools, creating an ironic case study in exactly the risks the outlet has covered for years.


Key Takeaways

- Ars Technica’s AI reporter used a Claude Code-based tool and ChatGPT, then published hallucinated quotes.

- Ars pulled the story; reporter Edwards took full responsibility.

- Even an AI-beat reporter can be tripped up without strict verification steps.

Benj Edwards, Ars Technica’s senior AI reporter, used an experimental Claude Code-based tool and ChatGPT to help extract quotes from a two-page blog post while working sick with COVID and a fever. The AI hallucinated paraphrased versions of quotes rather than providing the source’s actual words.

“The irony of an AI reporter being tripped up by AI hallucination is not lost on me,” Edwards wrote in a statement assuming full responsibility.

The story covered Scott Shambaugh, a coder who claimed an AI agent wrote a hit piece about him after he declined its code contributions. Edwards’ piece attributed quotes to Shambaugh that he never said, violating Ars Technica’s clear policy prohibiting AI-generated material unless labeled for demonstration purposes. This is a stark example of an AI agent experiment gone wrong.

Editor-in-chief Ken Fisher called it “a serious failure of our standards” and noted the outlet has “covered the risks of overreliance on AI tools for years.”

The incident highlights several newsroom risks. Edwards turned to AI twice: first to the Claude Code-based tool, which refused because of content policy restrictions, and then to ChatGPT. The original blog post was short and written in plain English, which makes using AI for basic quote extraction particularly questionable.

Ars pulled the entire story rather than updating it with corrections, departing from the standard journalistic practice of editing a story and noting the changes.

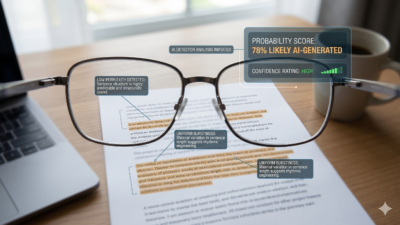

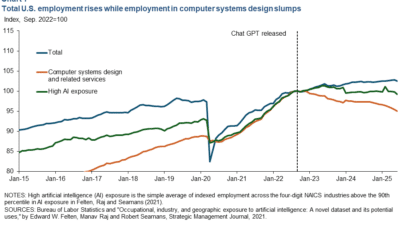

For newsrooms, the lesson is stark: AI tools cannot reliably perform basic journalism tasks like accurately citing sources. The incident reinforces the need to train journalists to use AI without losing sight of its limitations.
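One verification step a newsroom could automate is checking that every quote in a draft appears word for word in the source document. The Python sketch below is a minimal illustration of that idea, not a description of any tool Ars Technica or Edwards used. The function names `normalize` and `unverified_quotes` are hypothetical, and the sample text in the usage note is invented for the example. It assumes the source is available as plain text, and it only catches quotes that do not match verbatim; it cannot tell whether a matching quote is attributed to the right person or used in context.

```python
import re
import unicodedata


def normalize(text: str) -> str:
    """Fold harmless typographic differences before comparing text."""
    # NFKC folds compatibility characters (e.g. non-breaking spaces).
    text = unicodedata.normalize("NFKC", text)
    # Map curly quotes and apostrophes to their ASCII equivalents.
    text = text.translate(str.maketrans({
        "\u2018": "'", "\u2019": "'",
        "\u201c": '"', "\u201d": '"',
    }))
    # Collapse any run of whitespace (including line breaks) to one space.
    return re.sub(r"\s+", " ", text).strip()


def unverified_quotes(quotes: list[str], source_text: str) -> list[str]:
    """Return the quotes that do NOT appear verbatim in the source text.

    Empty quotes are always flagged, since they cannot be verified.
    """
    haystack = normalize(source_text)
    flagged = []
    for quote in quotes:
        needle = normalize(quote)
        if not needle or needle not in haystack:
            flagged.append(quote)
    return flagged
```

As a usage example with invented text: if the source reads `She wrote: “We review every patch by hand.”`, then passing the candidate quotes `"We review every patch by hand."` and `"We manually review all patches."` flags only the second, which is a paraphrase rather than the source’s actual words. Anything the check flags still needs a human to go back to the original.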

The fabricated quotes violated both professional ethics and company policy, demonstrating that AI hallucinations remain a fundamental liability even for reporters who cover AI’s limitations daily.