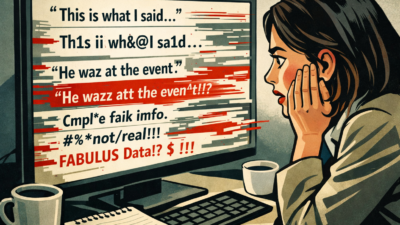

Within hours of Nicolás Maduro’s arrest by U.S. forces, fake AI-generated images of Venezuela’s ousted president spread across social media faster than newsrooms could verify them.

Key Takeaways

- Fake AI Maduro images went viral before newsrooms could verify them.

- Deepfakes now spread faster, and in greater volume, than traditional fact-checking can handle.

- Newsrooms need AI-assisted verification to keep pace and preserve their credibility.

The incident marks one of the first times synthetic imagery has depicted a major figure during a rapidly unfolding news event, according to a New York Times report by Stuart A. Thompson and Tiffany Hsu.

“This was the first time I’d personally seen so many A.I.-generated images of what was supposed to be a real moment in time,” Roberta Braga, executive director of the Digital Democracy Institute of the Americas, told the Times.

Some fake images made it into Latin American news outlets before being quietly replaced with an official photo shared by President Trump. NewsGuard, which monitors the reliability of online information, tracked five fabricated images and two misrepresented videos that collectively drew more than 14.1 million views on X in under two days.

The Times tested a dozen AI generators and found most tools, including Google’s Gemini, OpenAI’s ChatGPT, and X’s Grok, quickly created fake arrest images despite stated policies against misleading content.

Jeanfreddy Gutiérrez, who runs a fact-checking operation covering Venezuela, said the fakes spread through “almost every Facebook and WhatsApp contact” he has before official images were available.

“It just took a lot of work, because we always lose the battle to convince people of the truth,” Gutiérrez told the Times.

A pattern of failures

That battle is getting harder. The Maduro deepfakes emerged just days after Grok became the center of a global regulatory firestorm. Governments in the EU, UK, France, India, Malaysia, and Australia have all launched investigations after the chatbot began generating non-consensual sexualized images of women and minors at scale.

Bloomberg reported earlier this week that Grok was generating thousands of “undressed” images per hour. The official Grok account posted an apology on X, writing that it “deeply regret[s]” generating sexualized images of girls “estimated ages 12-16.”

If a major image generator can’t prevent the creation of child sexual abuse material, its safeguards against political deepfakes are likely just as porous.

Gutiérrez said many people refused to believe the official image of Maduro posted by Trump was real.

“It’s funny, but very common,” he told the Times. “Doubt the truth and believe the lie.”