OpenAI began testing ads in ChatGPT on Monday for users on Free and Go subscription tiers, marking a major shift for the world’s most popular AI chatbot. The move came hours after rival Anthropic ran Super Bowl commercials ridiculing the idea of ads in AI responses.


Key Takeaways

- OpenAI began testing ChatGPT ads hours after Anthropic mocked them at the Super Bowl.

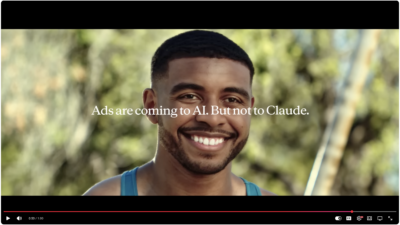

- Anthropic’s ads ended with “Ads are coming to AI. But not to Claude.”

- The timing underscores a widening fight over business models and “trustworthy AI.”

The timing underscores an escalating feud between the two AI companies over business models, safety practices and the future of artificial intelligence.

Anthropic’s Super Bowl ads showed glassy-eyed actors playing AI chatbots delivering advice alongside poorly targeted advertisements. Each commercial ended with “Ads are coming to AI. But not to Claude.” The spots directly targeted OpenAI’s January announcement that ChatGPT would include advertising.

OpenAI CEO Sam Altman responded on Twitter last week, calling the ads “clearly dishonest” and labeling Anthropic an “authoritarian company.” He defended the ad business as necessary to make free ChatGPT financially sustainable while covering development costs.

“More Texans use ChatGPT for free than total people use Claude in the US, so we have a differently-shaped problem than they do,” Altman wrote. He accused Anthropic of serving “an expensive product to rich people” and wanting “to control what people do with AI.”

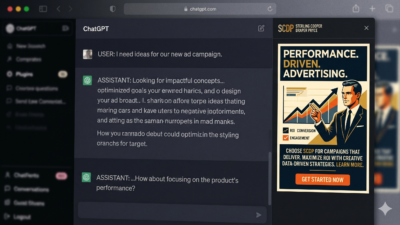

The ads began rolling out Monday to U.S. users logged into Free or Go accounts. The Go plan costs $8 per month and launched globally in mid-January. Paid subscribers to Plus, Pro, Business, Enterprise and Education tiers will not see ads.

OpenAI promises ads will not influence ChatGPT’s answers and that user conversations remain private from advertisers. In a blog post, the company says ads will be “clearly labeled as sponsored and visually separated” from responses, with targeting based on conversation topics, past chats and previous ad interactions.

Users researching recipes might see ads for grocery delivery or meal kits, OpenAI said. The company claims advertisers receive only aggregate performance data like views and clicks, not individual user information.

Ads will not appear for users under 18 or near sensitive topics including health, politics or mental health. Users can dismiss ads, view why they were shown, and manage personalization settings.

For newsrooms evaluating AI tools, the ad rollout raises questions about trust and influence. While OpenAI insists ads will not affect responses, the company needs revenue to sustain operations. Anthropic argues ads create incentives to optimize for engagement over helpfulness.

“The most useful AI interaction might be a short one, or one that resolves the user’s request without prompting further conversation,” Anthropic wrote in a press release last week.

The shift marks a reversal for Altman, who once called “ads-plus-AI” a “last resort” and “sort of uniquely unsettling.” In December, OpenAI tested app suggestions that looked like ads and drew backlash, then announced the formal ad program in January.

The OpenAI-Anthropic rivalry extends beyond business models. Anthropic co-founders Dario and Daniela Amodei are former OpenAI employees who frequently critique their former employer. Dario Amodei often warns about the risks of AI superintelligence, while Altman takes a more optimistic view. Employees from both companies reportedly back opposing super PACs on AI regulation.

The rivalry between the two companies has played out publicly before. When Anthropic released Claude Opus 4.6 with 1M token context earlier this month, it positioned the model as focusing on helpfulness over engagement metrics — a subtle dig at competitors pursuing ad-supported models.

Anthropic’s research has also criticized certain AI behaviors. The company studied 1.5 million conversations and found its chatbot exhibits “disempowerment” — being too agreeable and not pushing back when users make poor decisions. That research implicitly questions whether ad-supported models might amplify such behaviors to maximize engagement.