Any newsroom considering AI-powered comment moderation faces a fundamental question: what happens to the data? Comment sections generate streams of user-generated content, behavioral signals, and potentially identifying information. Handing that to a third-party vendor requires understanding not just what the system does, but how it handles everything flowing through it.

Key Takeaways

- Utopia Analytics is a GDPR-compliant AI comment-moderation platform.

- Its security posture is solid, but publishers must configure it correctly.

- Review data retention and sub-processor terms before going live.

Utopia Analytics operates from Finland and markets itself as a context-aware moderation platform that learns each publisher’s specific standards. The system ingests comment text, conversation history, article metadata, and user behavior patterns to make automated publish/reject decisions. For the platform to work effectively, it must process substantial amounts of user data—and retain enough of it to continuously retrain its models.

The short verdict: Utopia’s GDPR foundation and EU hosting provide a stronger privacy baseline than many US-based alternatives. But publishers with strict compliance requirements will find gaps in publicly available security details that require direct vendor engagement to close.

Where Utopia presents risk

The primary concern stems from the nature of the service itself. Utopia's AI models require training on historical comment data and ongoing access to new comments for retraining, typically every two weeks. As a result, a substantial volume of user content flows through Utopia's systems continuously.

Publishers must evaluate whether their comment sections contain personally identifiable information, sensitive political speech, or other content that elevates data handling risk. For publications operating in regions with strict data localization requirements, the Finnish hosting location may present compliance considerations—though EU hosting is generally favorable for GDPR purposes.
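One way to start that evaluation is to sample exported comments and count how many contain obvious identifiers. The sketch below is a minimal, hypothetical audit script. It assumes comments have already been exported from your CMS as plain strings. The names `scan_comments` and `PII_PATTERNS` and the regex patterns are illustrative assumptions and have nothing to do with Utopia's platform. A regex pass like this catches only crude cases such as emails and phone numbers. It will miss most PII, such as names and street addresses, and it will flag some false positives such as long dates or ID numbers, so treat the result as a rough signal for your legal team, not a compliance finding.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately crude patterns for illustration only.
# Real PII detection needs much more (names, addresses, national IDs,
# locale-specific phone formats) and will still be imperfect.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # A digit, 7+ digits/spaces/punctuation, then a digit. Also matches
    # some dates and ID numbers, so expect false positives.
    "phone": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


@dataclass
class PiiReport:
    total: int              # number of comments scanned
    flagged: int            # comments matching at least one pattern
    counts: dict[str, int]  # comments matching each individual pattern

    @property
    def flagged_share(self) -> float:
        # Share of sampled comments with at least one match (0.0 for an empty sample).
        return self.flagged / self.total if self.total else 0.0


def scan_comments(comments: list[str]) -> PiiReport:
    """Count how many comments in a sample contain pattern-detectable PII."""
    counts = {name: 0 for name in PII_PATTERNS}
    flagged = 0
    for text in comments:
        hit = False
        for name, pattern in PII_PATTERNS.items():
            if pattern.search(text):
                counts[name] += 1
                hit = True
        if hit:
            flagged += 1
    return PiiReport(total=len(comments), flagged=flagged, counts=counts)


if __name__ == "__main__":
    # Made-up sample comments, not real reader data.
    sample = [
        "Great piece, thanks for the reporting.",
        "Email me at reader@example.com if you want the documents.",
        "Call my office on +358 40 123 4567 about this.",
        "The council vote was 5-4, not 6-3.",
    ]
    report = scan_comments(sample)
    print(report.flagged, report.total, report.counts)
```

Running the scan over a few thousand recent comments, rather than the whole archive, is usually enough to tell whether identifiers appear occasionally or routinely. That distinction is worth raising with the vendor when you discuss retention and deletion terms.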

Technical details about encryption methods, access controls, data retention periods, and incident response procedures aren’t publicly specified. Trust and safety director Santiago Osorio notes that “both security and privacy are very important for the sort of clients we deal with” and describes a “scrutinized process of reviewing these aspects carefully with their legal teams.” Translation: expect these conversations during sales, not before.

Where Utopia delivers

The strongest point in Utopia’s favor is regulatory grounding. The company operates under GDPR, and Osorio states they “followed GDPR practices even before GDPR came into force.” This standard applies regardless of where clients are located, providing a baseline privacy framework for all deployments.

The company also positions itself around ethical AI principles, referencing the United Nations Universal Declaration of Human Rights and describing itself as “ethically sustainable.” That’s corporate values language rather than technical controls—but it signals organizational attention to responsible AI deployment in content moderation contexts.

For publishers comparing options, Utopia’s EU jurisdiction and proactive GDPR stance put it ahead of vendors operating from less privacy-forward regulatory environments.

The bottom line

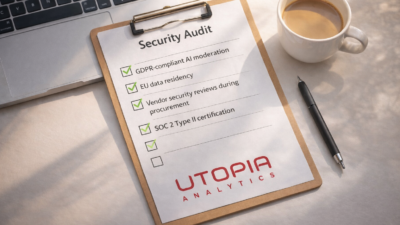

Utopia Analytics is a reasonable choice for publishers who:

- Need AI moderation and want a GDPR-compliant vendor

- Can accept EU data residency

- Have legal teams prepared to conduct vendor security reviews during procurement

Utopia may not be the right fit if you:

- Require SOC 2 Type II certification or equivalent third-party audits

- Need data residency outside the EU

- Operate under industry-specific regulations requiring detailed security attestations upfront

- Lack legal resources to conduct thorough vendor due diligence

Questions to ask before signing

- What specific data retention periods apply to comment content and user behavioral data?

- What encryption standards protect data at rest and in transit?

- What access controls limit who can view raw comment data?

- Is a Data Processing Agreement available with specific deletion provisions?

- What incident response procedures exist, and what notification timelines apply?

Contact Utopia Analytics directly with these questions. Engage your legal and security teams early, particularly if you operate across multiple jurisdictions or handle sensitive content categories.

Frequently Asked Questions

What is Utopia Analytics?

Utopia Analytics is a comment moderation and community management platform that uses AI to help publishers manage reader comments. It analyzes content for toxicity, spam, and policy violations, helping news organizations maintain productive comment sections without large moderation teams. It's particularly strong in Nordic languages and European news contexts.

How does Utopia Analytics handle data privacy?

Utopia Analytics processes reader comment data and associated metadata through its AI moderation systems. The platform operates under Finnish law with GDPR compliance. Publishers should request and review the full data processing agreement to understand exactly what comment data is processed and retained, and how it may be used beyond core moderation functions.

How does Utopia Analytics' moderation work?

Utopia Analytics uses machine learning trained on news-specific comment data to detect toxic, hateful, and off-topic content with high accuracy. Its models can be customized to a publisher's community standards. Most publishers report substantial reductions in moderation workload and measurable improvements in comment section quality after full implementation.

How does Utopia compare with other comment moderation tools?

Utopia competes with Coral (open source, from Vox Media), Disqus, and Civil Comments. Its key differentiators are strong GDPR compliance, deep AI training on news-specific comment patterns, and particular strength in Nordic languages. Coral is a strong alternative for US newsrooms prioritizing open-source tools and community-building features.

Who is Utopia Analytics best suited for?

Utopia Analytics serves publishers ranging from regional news sites to major national outlets. It's most valuable for newsrooms large enough to have active comment communities but too small to staff a full-time dedicated moderation team, automating the bulk of routine moderation while escalating edge cases and appeals to human editors.