For Proto Thema, one of Greece’s largest online publishers, reader comments were both an asset and a recurring headache. Open threads encouraged debate, drove return visits, and kept readers on the site longer. But anonymous participation also attracted a steady stream of abuse, off-topic arguments, and toxic exchanges that staff had to sift through comment by comment.


Key Takeaways

- Greek publisher Proto Thema uses Utopia Analytics to automate comment moderation.

- The AI filters toxic content while preserving civil debate threads.

- The setup suggests that newsrooms with only a few moderators can handle comment volume at scale.

Journalists found themselves spending hours on work they neither enjoyed nor considered core to their jobs. Delays in manual review meant comments appeared long after articles were published, undermining real-time discussion and the engagement metrics advertisers care about. More than once, the newsroom weighed shutting comments down entirely.

Utopia Analytics offered a different option: an AI system trained on Proto Thema’s own moderation history that could shoulder most of the decision-making while staying within the outlet’s standards. The goal was not to outsource judgment entirely, but to ensure that human moderators focused on edge cases instead of every single submission.

The gist

Utopia’s deployment at Proto Thema shows how an AI-led approach can keep comments open without overwhelming staff.

- AI-powered moderation now handles 80–90 percent of comments automatically

- Journalists recovered about 80 percent of the time once spent on manual review

- Monthly comment volume tripled to roughly 250,000, with readers staying longer on site

How they did it

Proto Thema’s starting point was a familiar mix of high comment volume and limited moderation capacity. The newsroom allowed readers to post without logging in, which made participation easy but also opened the door to “many, many, many” inappropriate comments that staff had to catch after the fact.

Training on historical data: Utopia began by ingesting several months of Proto Thema’s accepted and rejected comments, along with the outlet’s moderation guidelines. That dataset allowed the AI to learn how the newsroom differentiated between acceptable debate and comments that should be blocked.

Building a context-aware model: Rather than relying on static keyword lists, Utopia’s system evaluates each comment in its broader environment—article topic, headline, whether it is a new comment or a reply, and up to six lines of conversation history. That makes it more effective at catching subtle insults, coded language, and seemingly neutral phrases that become problematic in specific contexts.
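The article does not describe Utopia's actual input format. The sketch below only illustrates what "context-aware" means in practice: the comment is bundled with the article topic, the headline, its new-or-reply status, and at most the six most recent thread lines before anything scores it. All names here (`CommentContext`, `build_context`, `as_text`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

# The article says the model sees up to six lines of conversation history.
MAX_HISTORY_LINES = 6


@dataclass
class CommentContext:
    """Hypothetical input record for a context-aware moderation model."""
    text: str
    article_topic: str
    headline: str
    is_reply: bool
    history: List[str] = field(default_factory=list)

    def as_text(self) -> str:
        """Flatten the record into one string that a text classifier could score."""
        kind = "reply" if self.is_reply else "new comment"
        lines = [
            f"topic: {self.article_topic}",
            f"headline: {self.headline}",
            f"type: {kind}",
        ]
        lines += [f"history: {line}" for line in self.history]
        lines.append(f"comment: {self.text}")
        return "\n".join(lines)


def build_context(text: str, article_topic: str, headline: str,
                  prior_lines: List[str], is_reply: bool) -> CommentContext:
    """Attach article metadata and the most recent thread lines to a comment."""
    # Slicing keeps at most the last six lines, oldest first; an empty thread yields [].
    recent = list(prior_lines[-MAX_HISTORY_LINES:])
    return CommentContext(text, article_topic, headline, is_reply, recent)
```

A keyword filter would see only the `text` field. The point of the record is that an identical sentence can score differently under a different headline or after a different exchange.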

Setting confidence thresholds: Each comment receives a confidence score indicating how likely it is to pass moderation. Proto Thema started with conservative thresholds, letting the AI auto-approve or reject only the clearest cases and routing borderline comments to human reviewers. As trust in the system grew, the thresholds were adjusted to widen the range of scores handled automatically, so the AI decided a larger share of comments on its own.
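A two-sided threshold scheme of the kind described above can be sketched as follows. This assumes a single "likely to pass" score between 0 and 1, with separate approve and reject cut-offs. The threshold values and function names are illustrative and do not come from Utopia or Proto Thema.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical two-sided thresholds on a 'likely to pass' score in [0, 1]."""
    approve_at: float    # auto-approve when score >= approve_at
    reject_below: float  # auto-reject when score < reject_below

    def __post_init__(self) -> None:
        if not 0.0 <= self.reject_below <= self.approve_at <= 1.0:
            raise ValueError("need 0 <= reject_below <= approve_at <= 1")


def route(score: float, t: Thresholds) -> str:
    """Return 'approve', 'reject', or 'human_review' for one comment."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be in [0, 1]")
    if score >= t.approve_at:
        return "approve"
    if score < t.reject_below:
        return "reject"
    return "human_review"  # the uncertain middle band goes to people


def automation_rate(scores: Iterable[float], t: Thresholds) -> float:
    """Share of comments decided without a human."""
    decisions = [route(s, t) for s in scores]
    if not decisions:
        return 0.0
    return sum(d != "human_review" for d in decisions) / len(decisions)


# Illustrative values only: a strict starting point and a looser later setting.
CONSERVATIVE = Thresholds(approve_at=0.98, reject_below=0.02)
RELAXED = Thresholds(approve_at=0.90, reject_below=0.10)
```

Take the sample scores `[0.01, 0.05, 0.5, 0.95, 0.99]`. The conservative setting decides 2 of the 5 comments automatically, and the relaxed setting decides 4 of 5. Narrowing the middle band is what raises the automation rate.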

Ongoing calibration: Utopia recommends regular check-ins during the early months of deployment. Proto Thema’s team reviewed false positives and false negatives, discussed edge cases, and refined policies so the model could be retrained on more precise examples of desired behavior.
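The review loop above can be illustrated with a small tally over a human-checked sample. The sketch assumes each reviewed item records the AI's decision and the human's final call. It also assumes "positive" means "rejected", so a false positive is a comment the AI rejected but a human approved. The function name and record shape are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple

DECISIONS = {"approve", "reject"}


def calibration_report(reviews: Iterable[Tuple[str, str, str]]) -> dict:
    """Compare AI decisions with human decisions on a reviewed sample.

    Each review is (comment_text, ai_decision, human_decision). Here a
    'false positive' is a comment the AI rejected but a human approved, and a
    'false negative' is one the AI approved but a human rejected.
    """
    counts: Counter = Counter()
    corrections: List[Tuple[str, str]] = []
    for text, ai, human in reviews:
        if ai not in DECISIONS or human not in DECISIONS:
            raise ValueError(f"unknown decision: {ai!r}/{human!r}")
        if ai == human:
            counts["agree"] += 1
            continue
        counts["false_positive" if ai == "reject" else "false_negative"] += 1
        # Disagreements, labelled with the human call, become retraining examples.
        corrections.append((text, human))
    total = sum(counts.values())
    return {
        "false_positive": counts["false_positive"],
        "false_negative": counts["false_negative"],
        "agreement_rate": counts["agree"] / total if total else 0.0,
        "corrections": corrections,
    }
```

The `corrections` list mirrors the idea of retraining on more precise examples: every case where the newsroom overruled the model is fed back with the newsroom's label attached.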

Key numbers

Utopia’s implementation at Proto Thema produced a mix of time savings and engagement gains.

- Moderation time saved: Journalists gained back roughly 80 percent of the hours they had previously devoted to comment review, allowing them to focus on reporting and editing.

- Automation rate: Utopia’s AI now handles about 80–90 percent of moderation decisions automatically, reserving only complex or sensitive cases for human review.

- Comment volume: Monthly comments have approximately tripled since deployment, to around 250,000 per month.

- Audience behavior: Readers are spending longer on the site, with some visiting primarily to read and participate in comment threads.

What to watch for

Utopia’s materials emphasize that successful deployments still depend on clear policies and thoughtful oversight.

- Policy clarity: The AI’s performance is closely tied to the quality of the guidelines and historical decisions it is trained on; vague or inconsistent policies will produce uneven results.

- Edge cases and sensitive topics: Even with high automation, Utopia recommends maintaining 10–20 percent human review for breaking news, controversial subjects, and borderline comments that require editorial judgment.

- Moderator behavior: Analytics have revealed that some human moderators in other organizations simply “click accept, accept, accept” when paid by volume, a reminder that human oversight can drift without monitoring.

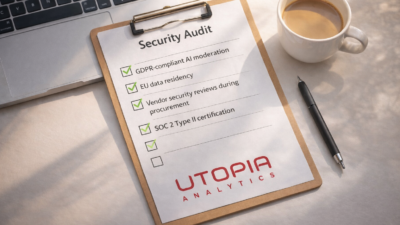

- Security and privacy detail: Public-facing materials offer limited specifics on technical controls; publishers still need to review contracts and documentation with their legal and security teams.

In Proto Thema’s case, Utopia turned moderation from an exhausting chore into a manageable part of daily operations. The comments section remains open, engagement is higher, and journalists spend more of their time on the work they were hired to do.

For publishers facing similar pressures, Utopia’s example suggests that AI-led moderation, when trained on local standards and paired with clear policies, can reclaim the value of the comments section without accepting the worst of what it can become.