The comments section remains one of journalism’s most vexing challenges. Done right, it builds community, drives return visits, and keeps readers on your site longer—metrics that matter to advertisers and subscription teams alike. Done wrong, it becomes a toxic swamp that alienates readers, exposes your brand to liability, and consumes editorial resources that should be spent on journalism.


Key Takeaways

- Utopia Analytics uses AI to keep comment sections civil at scale.

- Newsrooms choose it for its GDPR compliance and because it requires minimal moderation staff.

- Automated filtering reduces toxic content without killing open debate.

Most newsrooms have experienced this cycle firsthand. They launch comments with optimism, watch toxicity overwhelm their capacity to moderate, and eventually shut the whole thing down. Then the engagement metrics suffer, someone proposes bringing comments back, and the cycle repeats.

Utopia Analytics offers a way off this treadmill. The Finnish company’s AI-powered moderation platform handles the bulk of comment review automatically, freeing journalists to do their actual jobs while maintaining the kind of civil discourse that keeps readers coming back. Here’s why newsrooms are making the switch.

1. The AI understands context, not just keywords

Older moderation systems worked like spam filters—they maintained dictionaries of banned words and flagged anything that matched. Users quickly learned to substitute characters or use creative spelling, turning moderation into an endless game of whack-a-mole.
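To make the whack-a-mole problem concrete, here is a minimal Python sketch of a dictionary-based filter of the kind described above. The word list, substitution map, and function names are hypothetical illustrations, not code from any real moderation product. Patching the filter to undo common character substitutions catches one evasion, and a spaced-out spelling immediately defeats it again.

```python
import re

# Hypothetical banned-word dictionary, standing in for the lists older filters maintained.
BANNED_WORDS = {"idiot", "moron"}

# Undo a few common character substitutions (an illustrative, incomplete map).
LEET_MAP = str.maketrans({"1": "i", "0": "o", "3": "e", "@": "a", "$": "s"})


def keyword_filter(comment: str) -> bool:
    """Flag the comment if any whole word exactly matches a banned word."""
    words = re.findall(r"[a-z0-9]+", comment.lower())
    return any(word in BANNED_WORDS for word in words)


def patched_filter(comment: str) -> bool:
    """Same check, after mapping digits and symbols back to letters."""
    normalized = comment.lower().translate(LEET_MAP)
    words = re.findall(r"[a-z]+", normalized)
    return any(word in BANNED_WORDS for word in words)


print(keyword_filter("What an idiot."))       # True: exact match is caught
print(keyword_filter("What an 1d10t."))       # False: substitution slips through
print(patched_filter("What an 1d10t."))       # True: the patch catches this trick...
print(patched_filter("What an i d i o t."))   # False: ...but not the next one
```

Neither version looks at the article or the surrounding thread, which is the gap context-aware moderation aims to close.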

Utopia takes a fundamentally different approach. Its AI analyzes comments the way a human moderator would: considering the article topic, whether the comment is a reply to someone else, and the conversation history leading up to it.

The context-awareness extends to situational appropriateness. A comment like “this is the best thing that could happen” might be perfectly fine on most stories, but becomes problematic when posted under an article about a tragedy. The AI catches these distinctions because it’s trained to understand meaning, not just match patterns.

2. Custom models trained on your editorial standards

No two newsrooms have identical moderation policies. What’s acceptable on a sports blog might be out of bounds for a family newspaper. Utopia addresses this by building a custom AI model for each client, trained on that publication’s historical moderation decisions.

If you have three to six months of comment data with moderation decisions attached, Utopia can analyze your patterns and have a working model within two weeks. The system learns what your publication tolerates and what it doesn’t—then applies those standards consistently across hundreds of thousands of comments.

For publications launching comments for the first time, Utopia starts with a pre-trained large language model that catches obvious violations while your team moderates manually. Within two to three months, enough data accumulates to build your custom model. It’s not instant gratification, but it means the AI eventually reflects your specific editorial voice rather than some generic standard.

3. Time savings that actually change workflow

When Greek news publisher Proto Thema implemented Utopia, its journalists got back roughly 80 percent of the time they had spent moderating comments. That represents hours per day that reporters and editors could now spend interviewing sources, writing stories, and editing copy instead of slogging through comment queues.

The platform handles 80-90 percent of comments automatically, with configurable confidence thresholds that determine when human review kicks in. Utopia recommends starting conservatively and letting the system prove itself before dialing up automation, but says most newsrooms reach 85-90 percent automation within six months of implementation.
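The threshold mechanism can be sketched as a simple router. This sketch assumes the model emits a single probability that a comment breaks the rules; the names `p_reject`, `approve_threshold`, and `reject_threshold` are hypothetical and are not Utopia's actual configuration. A wide band between the two thresholds is the conservative setting, because more comments fall into it and go to a human.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    comment_id: str
    p_reject: float  # hypothetical model output: estimated probability the comment breaks the rules


def route(decision: Decision, reject_threshold: float, approve_threshold: float) -> str:
    """Auto-reject confident violations, auto-approve confident passes, send the rest to a human."""
    if not 0.0 <= approve_threshold <= reject_threshold <= 1.0:
        raise ValueError("thresholds must satisfy 0 <= approve <= reject <= 1")
    if decision.p_reject >= reject_threshold:
        return "reject"
    if decision.p_reject <= approve_threshold:
        return "approve"
    return "human_review"


def automation_rate(decisions: list[Decision], reject_threshold: float, approve_threshold: float) -> float:
    """Share of comments handled without a human moderator."""
    routes = [route(d, reject_threshold, approve_threshold) for d in decisions]
    if not routes:
        return 0.0
    return sum(r != "human_review" for r in routes) / len(routes)


# Made-up scores for ten comments.
scores = [0.01, 0.03, 0.2, 0.45, 0.6, 0.85, 0.97, 0.99, 0.05, 0.7]
sample = [Decision(f"c{i}", p) for i, p in enumerate(scores)]

print(automation_rate(sample, reject_threshold=0.95, approve_threshold=0.05))  # 0.5 (conservative)
print(automation_rate(sample, reject_threshold=0.75, approve_threshold=0.25))  # 0.7 (looser)
```

With these invented scores, the conservative band automates half the comments and the looser band automates 70 percent. Narrowing the band raises automation only by accepting more model decisions without human review, which is why letting the system prove itself first makes sense.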

This matters beyond simple efficiency. Manual moderation is, frankly, a miserable job. Nobody went to journalism school to spend their days reading toxic comments about politicians. When that burden disappears, staff morale improves and turnover decreases.

4. Actionable data on your community

Beyond moderation, Utopia provides analytics that inform editorial strategy. Monthly reports reveal which stories generate the most engagement, how publication timing affects audience interaction, and where toxicity clusters.

One insight has proved particularly valuable: roughly 60-70 percent of toxic content typically comes from just 3-4 percent of users. Identifying and removing these serial offenders dramatically improves the comment environment for everyone else. The data also exposes human moderators who are phoning it in by accepting or rejecting comments en masse without actually reviewing them.
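A figure like "60-70 percent of toxic content from 3-4 percent of users" is a concentration measure. The sketch below shows one way to compute it from the authors of flagged comments. The function name and the sample data are invented for illustration, and Utopia's own reporting may define the metric differently (for example, in whether commenters who were never flagged count toward the user base).

```python
from collections import Counter


def share_from_top_users(flagged_authors: list[str], total_users: int, top_fraction: float) -> float:
    """Fraction of flagged comments written by the most-flagged `top_fraction` of all commenters.

    `flagged_authors` holds one author ID per flagged comment; `total_users` counts every
    commenter, including those who were never flagged.
    """
    if not flagged_authors:
        return 0.0
    k = max(1, round(total_users * top_fraction))
    top = Counter(flagged_authors).most_common(k)
    return sum(count for _, count in top) / len(flagged_authors)


# Invented example: 100 commenters, 100 flagged comments.
flagged = ["a"] * 30 + ["b"] * 20 + ["c"] * 10 + ["d"] * 5 + [f"u{i}" for i in range(35)]
print(share_from_top_users(flagged, total_users=100, top_fraction=0.04))  # 0.65
```

Here the four most-flagged accounts (4 percent of commenters) wrote 65 of the 100 flagged comments, the kind of skew that makes targeting serial offenders so effective.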

Proto Thema saw comments triple after implementation, reaching approximately 250,000 per month. More importantly, readers started staying on the site longer to engage with discussions. Some readers now come specifically for the comments, checking what people are saying about headlines without even reading the underlying articles.

5. GDPR compliance built in

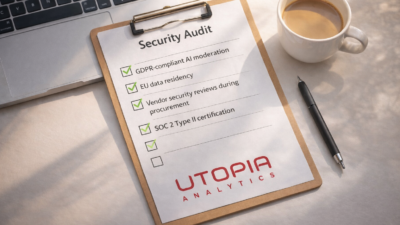

Utopia operates under the European Union’s General Data Protection Regulation, which means its stringent privacy standards apply regardless of where your newsroom is based. The company followed GDPR practices even before the regulation took effect, according to its trust and safety team.

The platform also emphasizes ethical AI practices, basing its approach on the United Nations Universal Declaration of Human Rights. For newsrooms concerned about the ethical implications of automated moderation—and that should include most newsrooms—this transparency matters.

Who should consider Utopia

The platform makes most sense for publications that want active comment sections but lack the staff to moderate them manually. Pricing starts around $2,000 monthly for mid-sized newsrooms, scaling up for larger operations with higher comment volumes. That’s not cheap, but it’s considerably less than hiring dedicated moderation staff.

If you’re currently in the “comments are too much work” phase of the cycle, Utopia offers a path to maintaining engagement without the resource drain that made you shut things down in the first place.

Frequently Asked Questions

Utopia Analytics’ AI is trained specifically on news publisher comment data, making it more accurate at identifying problematic content in news contexts than general-purpose moderation tools. It understands the distinction between legitimate critical discussion of news topics and actual harassment—a distinction general AI moderators frequently get wrong.

Publishers using Utopia Analytics typically report that AI moderation handles 70-90% of moderation decisions automatically, with only edge cases and appeals requiring human review. For newsrooms previously spending hours daily on comment moderation, this represents significant staff time savings that can be redirected to reporting.

Utopia Analytics offers APIs and integrations for common commenting implementations and can connect to custom comment infrastructure. Publishers should check compatibility with their specific commenting solution during the evaluation process. It works best with newsrooms running their own comment systems rather than third-party hosted comment platforms.

Utopia Analytics has particular strength in Nordic languages—Finnish, Swedish, Norwegian, Danish—and major European languages, given its origins. For newsrooms in these regions, it offers more accurate moderation than tools trained predominantly on English content. Multilingual newsrooms should test accuracy in their specific languages before full deployment.

Utopia Analytics provides moderation dashboards showing removed comment rates by violation type, peak moderation times, comment volume trends, and community health scores over time. This data helps newsrooms tune their community standards, understand reader behavior patterns, and demonstrate the scale of their moderation work to leadership and funders.
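As a rough illustration of the first of those dashboard metrics, the sketch below computes an overall removal rate and each violation type's share of removals. The input format and the violation labels are hypothetical; they are not Utopia's data model.

```python
from collections import Counter
from typing import Optional


def removal_report(decisions: list[tuple[str, Optional[str]]]) -> dict:
    """Overall removal rate plus each violation type's share of removed comments.

    Each decision is (outcome, violation_type); violation_type is None for approved comments.
    """
    removed = [vtype for outcome, vtype in decisions if outcome == "removed"]
    by_type = Counter(removed)
    return {
        "removal_rate": len(removed) / len(decisions) if decisions else 0.0,
        "by_type": {vtype: n / len(removed) for vtype, n in by_type.items()},
    }


# Invented sample: eight approved comments, one removed insult, one removed spam post.
sample = [("approved", None)] * 8 + [("removed", "insult"), ("removed", "spam")]
print(removal_report(sample))  # {'removal_rate': 0.2, 'by_type': {'insult': 0.5, 'spam': 0.5}}
```

Tracked month over month, shifts in these two numbers are what let a newsroom see whether a policy change moved the needle.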